Using Azure Event Hubs Capture to Store Long-term Events

Azure Event Hubs includes a built-in capability to retain events for 1 to 7 days. This retention period generally covers operational requirements, such as needing to replay a recent event.

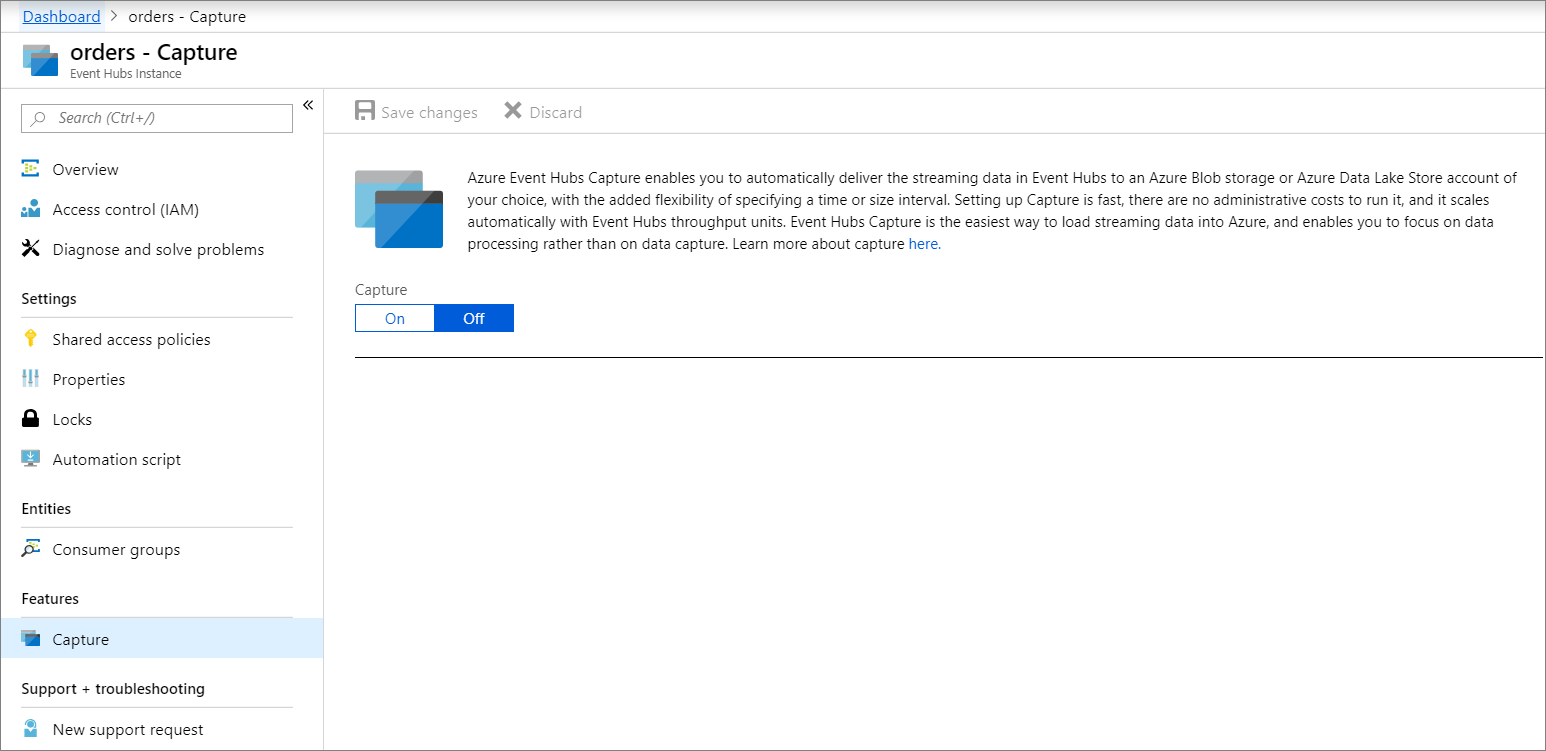

If you need to store data for more than 7 days, Capture, a feature of Azure Event Hubs, is the preferred solution for longer-term storage.

When configuring Capture, there are two locations where captured events can be stored: an Azure Blob Storage account or an Azure Data Lake Store account. This allows organizations to keep events for archival purposes, and it also unlocks advanced analytics scenarios such as machine learning or big data solutions.

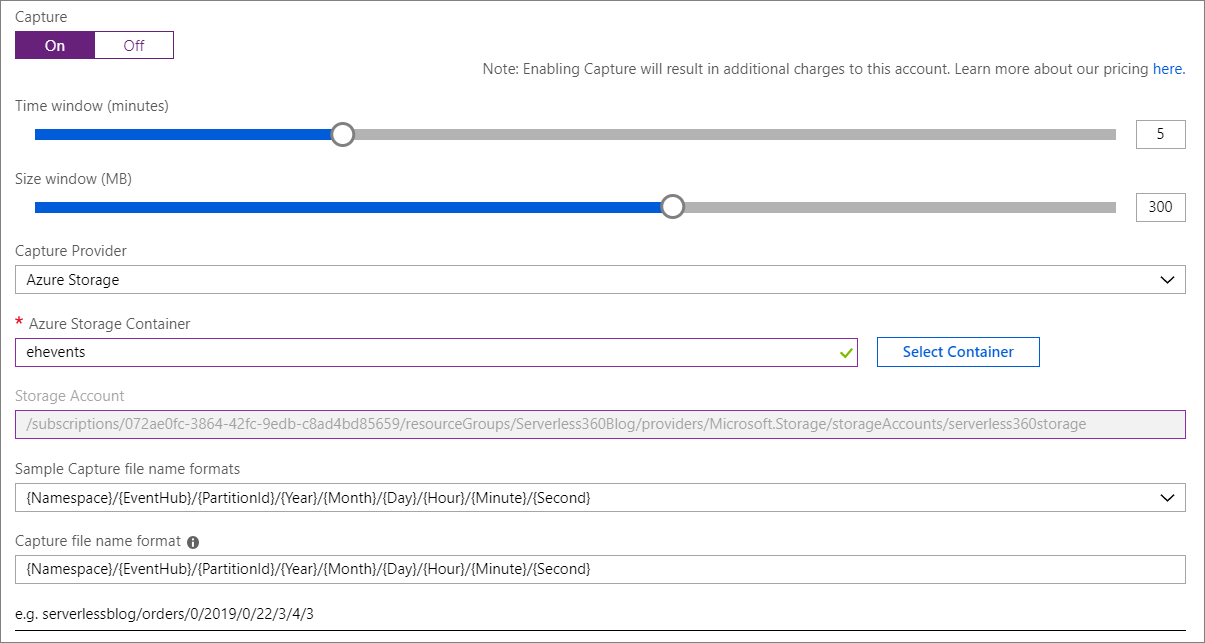

We now need to make some decisions, including the Time window and Size window settings. These settings determine when the next ‘event capture’ will take place: a capture is triggered as soon as either the Time window or the Size window is satisfied, whichever comes first.
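To make the "whichever comes first" rule concrete, here is a minimal simulation sketch. It is not how the service is implemented; the function name `simulate_capture`, the event tuples, and the choice to skip empty windows are all assumptions made for illustration.

```python
def simulate_capture(events, time_window_s, size_window_bytes):
    """Simplified model of the Capture trigger (illustrative only).

    events: list of (arrival_time_seconds, size_bytes), sorted by arrival time.
    Returns a list of (capture_time_seconds, reason, event_count) tuples.
    Assumption: windows that close with no buffered events are not recorded.
    """
    captures = []
    window_start = 0
    buffered_bytes = 0
    buffered_count = 0

    for arrival, size in events:
        # Close every time window that elapsed before this event arrived.
        while arrival >= window_start + time_window_s:
            window_start += time_window_s
            if buffered_count > 0:
                captures.append((window_start, "time", buffered_count))
            buffered_bytes = 0
            buffered_count = 0

        buffered_bytes += size
        buffered_count += 1

        # The size window can be satisfied before the time window elapses.
        if buffered_bytes >= size_window_bytes:
            captures.append((arrival, "size", buffered_count))
            window_start = arrival
            buffered_bytes = 0
            buffered_count = 0

    return captures
```

With a 60-second Time window and a 1,000-byte Size window, two small events in the first minute are captured when the time window closes, while a later burst of larger events is captured as soon as the buffered size crosses the threshold.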

In addition, we can choose our Capture Provider. In our scenario, we will choose Azure Storage, which requires us to provide an Azure Storage Container. We can also specify the file name format based on our requirements.
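As a rough illustration of how a file name format maps to a blob path, the sketch below expands a format string into a name. The default format shown and the zero-padded date parts are assumptions based on the documented Capture naming convention; the helper `capture_blob_name` and the sample namespace and hub names are hypothetical.

```python
from datetime import datetime, timezone

# Assumed default Capture naming convention; adjust to match your own setting.
DEFAULT_FORMAT = (
    "{Namespace}/{EventHub}/{PartitionId}/"
    "{Year}/{Month}/{Day}/{Hour}/{Minute}/{Second}"
)


def capture_blob_name(name_format, namespace, event_hub, partition_id, ts):
    """Expand a Capture-style file name format into a blob name (sketch)."""
    return name_format.format(
        Namespace=namespace,
        EventHub=event_hub,
        PartitionId=partition_id,
        Year=f"{ts.year:04d}",
        Month=f"{ts.month:02d}",
        Day=f"{ts.day:02d}",
        Hour=f"{ts.hour:02d}",
        Minute=f"{ts.minute:02d}",
        Second=f"{ts.second:02d}",
    ) + ".avro"


if __name__ == "__main__":
    stamp = datetime(2017, 12, 8, 3, 3, 17, tzinfo=timezone.utc)
    print(capture_blob_name(DEFAULT_FORMAT, "mynamespace", "myeventhub", 0, stamp))
```

Reordering the placeholders, for example putting the date parts ahead of the partition, changes how the files group inside the container, which can matter for downstream jobs that read one day at a time.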

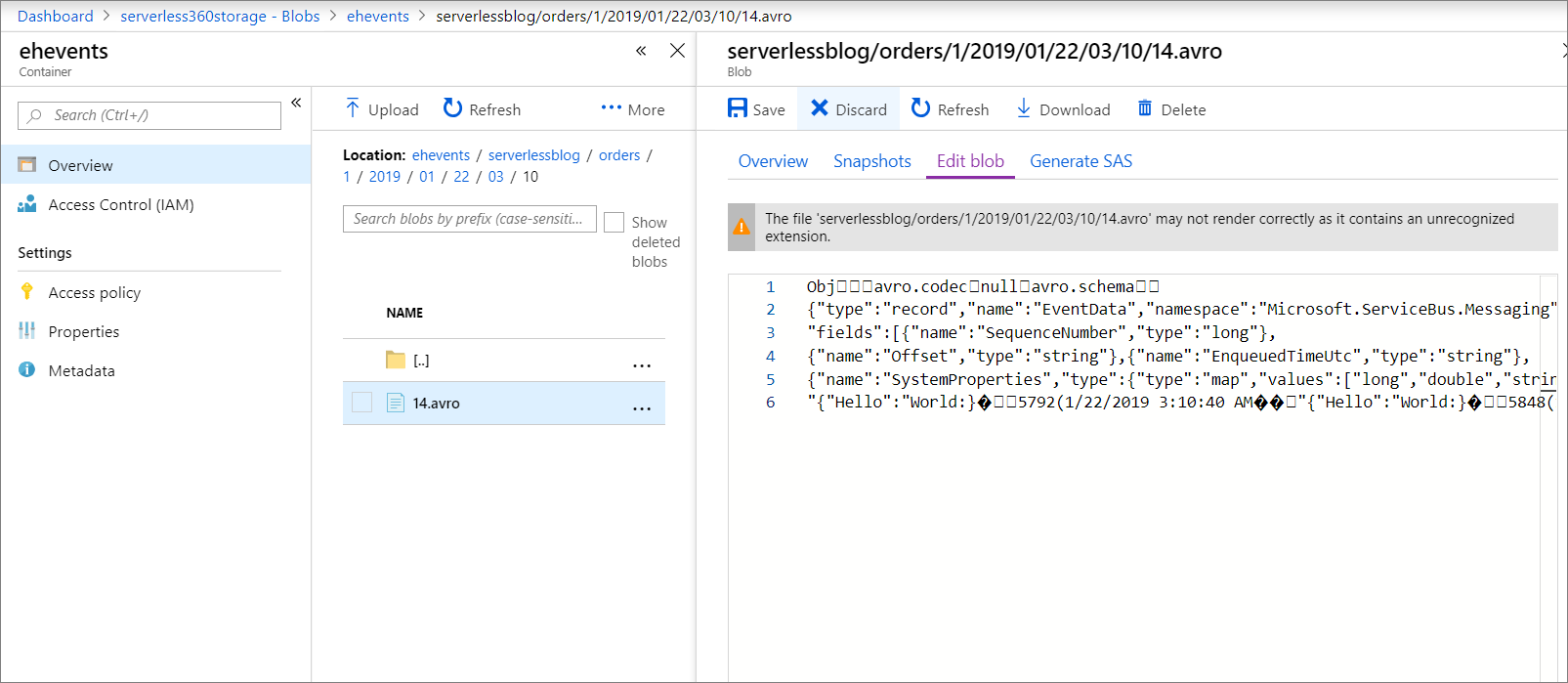

We will now send some events to our Event Hub and wait for one of our window settings to be satisfied. Once that happens, we will discover that a new file has been written to Blob Storage. Our files are written in Avro format. They can be opened in a text editor, where the embedded schema is readable but the binary records are not, or decoded in full using Apache Avro Tools.
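If Apache Avro Tools is not at hand, the header of a captured file can be inspected with a few lines of standard-library Python. The sketch below follows the Avro object container file layout (magic bytes, a metadata map, then a 16-byte sync marker) and reads only the header, not the event records. The function names are our own and are not part of any Azure or Avro library.

```python
import json

AVRO_MAGIC = b"Obj\x01"


def read_long(stream):
    """Decode one Avro long: a zigzag-encoded, variable-length integer."""
    shift = 0
    result = 0
    while True:
        byte = stream.read(1)
        if not byte:
            raise EOFError("unexpected end of Avro data")
        result |= (byte[0] & 0x7F) << shift
        if not byte[0] & 0x80:
            break
        shift += 7
    return (result >> 1) ^ -(result & 1)


def read_bytes(stream):
    """Read a length-prefixed byte string."""
    length = read_long(stream)
    data = stream.read(length)
    if len(data) != length:
        raise EOFError("unexpected end of Avro data")
    return data


def read_avro_header(stream):
    """Return (metadata dict, sync marker) from an Avro container file."""
    if stream.read(4) != AVRO_MAGIC:
        raise ValueError("not an Avro object container file")
    metadata = {}
    while True:
        count = read_long(stream)
        if count == 0:
            break  # a zero count ends the metadata map
        if count < 0:
            read_long(stream)  # negative count: skip the block byte size
            count = -count
        for _ in range(count):
            key = read_bytes(stream).decode("utf-8")
            metadata[key] = read_bytes(stream)
    return metadata, stream.read(16)


def schema_of(metadata):
    """Parse the embedded JSON schema stored under 'avro.schema'."""
    return json.loads(metadata["avro.schema"])
```

To use it, open a downloaded capture file in binary mode (for example `open("capture.avro", "rb")`, where the file name is a placeholder) and pass the file object to `read_avro_header`. Decoding the records themselves is better left to Avro Tools or an Avro library.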

Event Hubs provides out-of-the-box support for event data retention, spanning 1 to 7 days. However, when you need longer-term storage, or the ability to batch records, Capture can address these requirements by writing to either Azure Blob Storage or Azure Data Lake Store, where downstream advanced analytics processes can consume the data.